Infrabase.ai

The Story

AI Overview

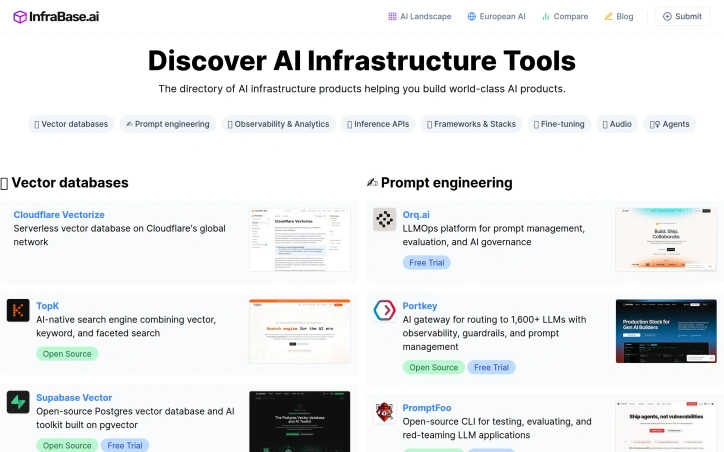

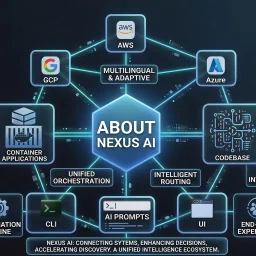

The directory serves builders deciding which AI infrastructure components to adopt: founders prototyping at seed stage, engineering teams scaling inference and observability, and architects selecting vector database solutions. The categories span the full infrastructure stack, from foundational services like vectorization and embedding APIs to higher-order tools for prompt management, agent monitoring, and evaluation frameworks.

What distinguishes Infrabase from generic tool aggregators is the specificity of its curation. Each category contains substantive options rather than purely aspirational listings. The directory emphasizes practical attributes: it flags open-source projects alongside commercial offerings, marks free trial availability, and acknowledges the diversity of deployment models—serverless, self-hosted, EU-sovereign—relevant to different organizational constraints. This matters because infrastructure decisions often turn on operational characteristics like data residency and cost scaling, not just feature parity.

The founder built Infrabase from direct experience evaluating infrastructure for a real project, accumulating working lists of products and technical notes substantial enough to justify sharing. This origin explains the site's practical bias. Rather than listing every tangential tool, it focuses on products that demonstrably function within specific categories. The selection acknowledges that the AI infrastructure market extends far beyond dominant cloud providers, a reality that reshapes purchasing power for teams taking AI seriously.

The directory's limitations stem from its breadth. With sixty-one inference APIs, twenty vector databases, and comparable volumes across categories, individual product comparisons flatten into metadata. Users cannot evaluate full feature matrices, benchmark results, or integration patterns within the directory itself. The site succeeds by redirecting focus to vendor pages rather than attempting comprehensive comparison. For teams in early evaluation stages this works well; for detailed diligence it points in the right direction without replacing specialized analysis.

Key Features

Consolidated Directory

Aggregates dozens of AI infrastructure vendors into a single organized database by functional category.

Category-Based Organization

Structures tools into domains including vector databases, prompt engineering, observability platforms, and inference APIs.

Practical Curation

Flags open-source projects alongside commercial offerings and marks free trial availability and diverse deployment models.

Infrastructure Stack Coverage

Spans foundational services like vectorization APIs through advanced tools for prompt management and agent monitoring.

Operational Focus

Emphasizes practical attributes including data residency, cost scaling, and vendor-neutral selection beyond dominant cloud providers.

Use Cases

1. Seed-stage Founders

Prototyping AI products requires evaluating multiple infrastructure components without researching dozens of specialized vendors separately.

2. Engineering Teams Scaling

Teams managing inference and observability benefit from understanding available options across deployment models and cost structures.

3. Enterprise Architects

Selecting vector databases and infrastructure hinges on operational characteristics like data residency and cost scaling relevant to organizational constraints.

4. Early-stage Stack Assembly

Reducing friction when evaluating a complete AI tech stack by consulting a curated consolidated directory.

FAQ

What categories of AI infrastructure tools does Infrabase cover?

Does Infrabase include open-source and self-hosted options?

Can I compare detailed features and benchmarks within Infrabase?

