#translation Startups & Tools

Discover the best translation startups, tools, and products on SellWithBoost.

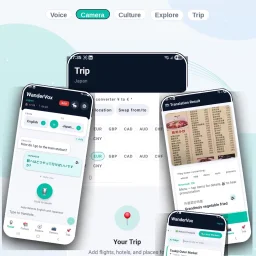

Traveling to foreign destinations can be a daunting experience, especially when language barriers hinder communication and navigation. WanderVox addresses this issue head-on, catering to travelers seeking to immerse themselves in local cultures. At its core, the app solves the problem of language barriers, enabling users to communicate effectively and discover hidden gems that often elude tourists. What stands out about WanderVox is its comprehensive approach to travel facilitation. It integrates multiple features that work together seamlessly to provide a holistic travel experience. The app's voice translation capability allows for instant speech-to-speech translation in 49 languages, facilitating real-time conversations with locals. The smart camera feature uses AI to detect and translate text from images, making it easier to understand menus, signs, and documents. The app's cultural guidance is another notable aspect, offering users essential local phrases, etiquette tips, and cultural insights for 51 destinations. This information is cached offline, ensuring access even without a signal. Additionally, the AI-curated local recommendations surface hyper-local suggestions, such as street food stalls and secret viewpoints, that are tailored to the user's location. WanderVox also includes a trip planner that organizes bookings, places, and reminders into a dated itinerary, complete with live currency conversion and booking reminders. Notably, Android users can translate typed text offline via on-device ML Kit, although voice and camera translation require a connection. The app is free to start, with availability on Google Play and a promised iOS release. By providing a robust set of features that cater to various aspects of travel, WanderVox positions itself as a valuable companion for travelers seeking an authentic experience.

Evaluating AI infrastructure tools sprawls across dozens of specialized vendors, pricing models, and documentation sites, creating significant friction for teams assembling their tech stack. Infrabase.ai consolidates this fragmentation into a single directory organized by functional category—vector databases, prompt engineering tools, observability platforms, inference APIs, and more—making it possible to compare options within each domain without hunting across the web. The directory serves builders deciding which AI infrastructure components to adopt: founders prototyping at seed stage, engineering teams scaling inference and observability, and architects selecting vector database solutions. The categories span the full infrastructure stack, from foundational services like vectorization and embedding APIs to higher-order tools for prompt management, agent monitoring, and evaluation frameworks. What distinguishes Infrabase from generic tool aggregators is the specificity of its curation. Each category contains substantive options rather than purely aspirational listings. The directory emphasizes practical attributes: it flags open-source projects alongside commercial offerings, marks free trial availability, and acknowledges the diversity of deployment models—serverless, self-hosted, EU-sovereign—relevant to different organizational constraints. This matters because infrastructure decisions often turn on operational characteristics like data residency and cost scaling, not just feature parity. The founder built Infrabase from direct experience evaluating infrastructure for a real project, accumulating working lists of products and technical notes substantial enough to justify sharing. This origin explains the site's practical bias. Rather than listing every tangential tool, it focuses on products that demonstrably function within specific categories. 
The selection acknowledges that the AI infrastructure market extends far beyond dominant cloud providers, a reality that reshapes purchasing power for teams taking AI seriously. The directory's limitations stem from its breadth. With sixty-one inference APIs, twenty vector databases, and comparable volumes across categories, individual product comparisons flatten into metadata. Users cannot evaluate full feature matrices, benchmark results, or integration patterns within the directory itself. The site succeeds by redirecting focus to vendor pages rather than attempting comprehensive comparison. For teams in early evaluation stages this works well; for detailed diligence it points in the right direction without replacing specialized analysis.

Streaming content across borders often creates a subtitle problem: foreign-language shows either come with no English subtitles, or viewers lose the chance to engage with the original-language dialogue. Netflix Live Translator solves this by intercepting Netflix subtitles in real time and replacing them with translations in any of 106 languages, letting viewers watch without missing dialogue or context. The extension targets language learners, international viewers, and anyone seeking content access beyond what Netflix's built-in subtitle options provide. What distinguishes this tool from other subtitle translation extensions is its architecture: it runs entirely in the browser with no backend server, no account creation, and no data collection. The developer has committed to privacy by design: the user's API key never leaves the browser, and the extension communicates only with Google's translation API. The workflow is deliberately minimal. Users select source and target languages from a popup, and the extension automatically detects subtitles on screen, translates them via Google Cloud, and replaces the originals instantly. A caching system prevents redundant API calls for repeated subtitle lines, reducing both latency and translation costs. The economic model relies on users bringing their own Google Cloud credentials. Google's free tier provides 500,000 characters per month (approximately sixteen feature-length films), enough for casual viewers at no cost. With only ten reported users and no ratings on the Chrome Web Store, Netflix Live Translator remains a niche utility. The extension launched in February 2026 and carries minimal friction for adoption: installation requires only a straightforward API key setup, which the developer guides users through directly in the interface. The developer operates it as a free project funded by optional donations, signaling this is more passion project than commercial venture. 
For viewers frustrated by subtitle limitations on Netflix or language learners seeking immersive practice, the tool addresses a genuine gap. Its browser-native architecture avoids the privacy and latency concerns of server-dependent translators, and the zero-cost base model removes financial barriers for eligible users. The main constraint is dependency on Google Cloud's free tier—once exhausted, users must fund their own API calls—but for casual use, the offering remains practical.
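
The caching and free-tier points above can be illustrated with a small sketch. This is not the extension's actual code (which runs in the browser); it is a minimal Python illustration, assuming a hypothetical `translate_fn` callable that stands in for the real translation request. The names `CachedSubtitleTranslator`, `fake_translate`, and `chars_sent` are invented for this example. The only figures taken from the description are the 500,000-character monthly allowance and the estimate of sixteen films.

```python
from typing import Callable, Dict, Tuple

FREE_TIER_CHARS_PER_MONTH = 500_000  # figure quoted in the description above


class CachedSubtitleTranslator:
    """Wraps a translation function with a cache and a character counter."""

    def __init__(self, translate_fn: Callable[[str, str, str], str]) -> None:
        # translate_fn(text, source, target) is a hypothetical stand-in for
        # the real API request; it is an assumption, not the extension's code.
        self._translate_fn = translate_fn
        self._cache: Dict[Tuple[str, str, str], str] = {}
        self.chars_sent = 0  # characters actually passed to translate_fn

    def translate(self, text: str, source: str, target: str) -> str:
        key = (text, source, target)
        if key not in self._cache:
            # Cache miss: only now is a (billable) request made.
            self.chars_sent += len(text)
            self._cache[key] = self._translate_fn(text, source, target)
        return self._cache[key]

    def remaining_free_chars(self) -> int:
        # Characters left in the monthly allowance, never below zero.
        return max(0, FREE_TIER_CHARS_PER_MONTH - self.chars_sent)


def fake_translate(text: str, source: str, target: str) -> str:
    # Placeholder "translation" so the sketch runs without network access.
    return f"[{target}] {text}"


translator = CachedSubtitleTranslator(fake_translate)
first = translator.translate("Hola", "es", "en")
second = translator.translate("Hola", "es", "en")  # served from the cache

# Sixteen films per 500,000 characters implies this budget per film.
chars_per_film = FREE_TIER_CHARS_PER_MONTH // 16
```

In this sketch the repeated line costs nothing the second time, so `chars_sent` stays at 4 after both calls. The sixteen-film estimate works out to about 31,250 characters of subtitles per film; how the real service counts characters for billing may differ from a simple `len(text)`.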

Breaking down language barriers during real-time conversations has long been a friction point for globally distributed teams, and Audilate directly addresses this challenge. The platform combines AI-powered speech transcription with simultaneous translation across over 100 languages, making it a practical solution for organizations where meetings, interviews, and collaborative discussions frequently span multiple geographies and language groups. The core value proposition centers on eliminating the lag and complexity that typically come with asynchronous translation workflows. Rather than recording conversations and processing them after the fact, Audilate delivers live transcription and translation, allowing participants to collaborate without stopping to manage language gaps. This is particularly relevant for companies hiring internationally, conducting cross-border partnerships, or operating distributed teams where English is not universally spoken as a first language. What distinguishes the product is its breadth of language support. With coverage across 100+ languages, the platform moves beyond serving just major language pairs and opens functionality to teams working in less commonly supported languages. This scope suggests the founders recognize that global collaboration extends well beyond English-to-Spanish or English-to-Mandarin scenarios. The integration of transcription and translation in a single workflow is also noteworthy—separate tools for these functions create unnecessary switching costs and synchronization challenges. The positioning emphasizes real-time processing, which is critical for the use cases mentioned. Whether facilitating a live meeting between team members in different countries, conducting remote interviews with international candidates, or enabling seamless cross-border conversations, the speed at which transcription and translation occur directly impacts usability. Delays of even a few seconds can derail natural conversation flow. 
The product targets organizations serious about global teamwork, particularly those for whom language support has become a competitive advantage or operational necessity. This includes multinational corporations, international service providers, distributed startups, and any team conducting work across language boundaries on a regular basis. The emphasis on meetings and interviews suggests the founders see their strongest initial adoption among HR, engineering, and business development functions that routinely conduct cross-language conversations. One practical consideration for potential users is how the platform integrates with existing communication infrastructure (meeting apps, video conferencing tools, and collaboration platforms), though those implementation details fall outside the scope of what's presented here. The foundational premise, however, is sound: removing language as a barrier to real-time collaboration remains a genuine problem for many organizations.