prompt-ctl.com

The Story

AI Overview

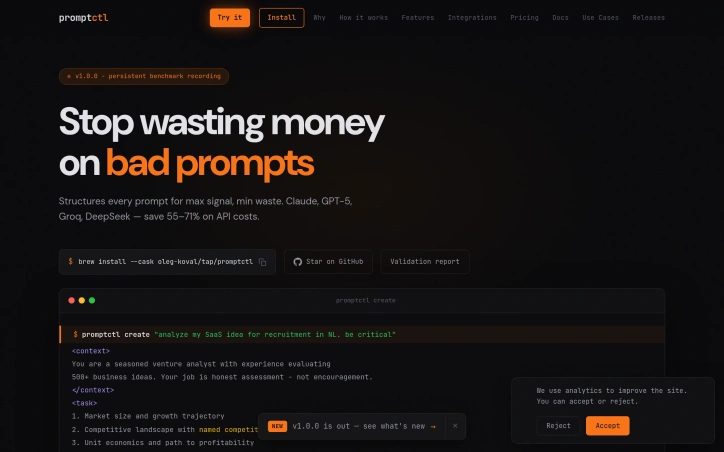

AI-generated

The core insight is straightforward: most prompt failures stem from ambiguity, not from model capability. Rather than relying on an LLM to fix a poorly articulated request, Promptctl applies established prompting best practices (personas, constraints, structured output formats) automatically and locally, with no API calls. The tool classifies user input against eleven task categories, assigns an expert persona and output structure, and formats everything into XML-tagged, decomposed instructions ready to execute.
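The following is a minimal Python sketch of a classify-then-format pipeline. It picks a category by keyword hits, assigns a persona, and emits XML-tagged, decomposed instructions. The page does not list Promptctl's eleven categories, its matching rules, or its tag names. `CATEGORY_RULES`, the personas, the tags (`<persona>`, `<task>`, `<step>`, and so on), `classify`, and `build_prompt` are therefore all hypothetical. Only the general approach comes from the description above: rule-based, local, and with no API calls.

```python
import re
from dataclasses import dataclass
from xml.sax.saxutils import escape

# Hypothetical rules: category -> (keywords, persona, output format).
# Promptctl's real eleven categories and keywords are not published here.
CATEGORY_RULES = {
    "code_review": (["review", "refactor", "bug"], "a senior software engineer",
                    "A list of issues, each with a suggested fix."),
    "summarization": (["summarize", "summary", "tl;dr"], "a technical editor",
                      "Three to five bullet points."),
    "data_analysis": (["analyze", "dataset", "csv"], "a data analyst",
                      "Findings first, then the method used."),
}
DEFAULT_RULE = ("general", "a knowledgeable domain expert", "A direct, concise answer.")


@dataclass
class StructuredPrompt:
    category: str
    xml: str


def classify(text: str) -> tuple[str, str, str]:
    """Pick the category with the most whole-word keyword hits (ties: first rule wins)."""
    lowered = text.lower()
    best, best_hits = DEFAULT_RULE, 0
    for category, (keywords, persona, output_format) in CATEGORY_RULES.items():
        hits = sum(1 for k in keywords if re.search(rf"\b{re.escape(k)}\b", lowered))
        if hits > best_hits:
            best, best_hits = (category, persona, output_format), hits
    return best


def build_prompt(text: str) -> StructuredPrompt:
    """Turn free-form text into a persona + decomposed, XML-tagged prompt."""
    category, persona, output_format = classify(text)
    # Naive decomposition: one instruction per sentence.
    steps = [s.strip() for s in re.split(r"(?<=[.?!])\s+", text.strip()) if s.strip()]
    # Escape user text so characters like "<" cannot break the XML structure.
    step_lines = "\n".join(f"    <step>{escape(s)}</step>" for s in steps)
    xml = (
        f"<persona>You are {persona}.</persona>\n"
        f'<task category="{category}">\n'
        f"  <instructions>\n{step_lines}\n  </instructions>\n"
        "</task>\n"
        "<constraints>State any assumptions explicitly.</constraints>\n"
        f"<output_format>{output_format}</output_format>"
    )
    return StructuredPrompt(category=category, xml=xml)


if __name__ == "__main__":
    print(build_prompt("Review this function for bugs. Suggest a refactor.").xml)
```

The rules are plain data, and matching is pure string processing. As a result, the same input always yields the same prompt, which is the deterministic behavior the overview describes.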

What distinguishes Promptctl from generic prompt-improvement services is its emphasis on cost visibility and integration with developer workflows. The tool compares costs across ten major models, including Claude Sonnet, GPT-5 variants, Llama, DeepSeek, and models served on Groq, and shows which offers the best value before any request executes. Cost tracking is built in, and users can send prompts directly through Promptctl, pipe them to the Claude CLI, or copy them for use elsewhere.
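A pre-run cost comparison comes down to multiplying expected token counts by each model's per-token prices and sorting the results. The sketch below is a minimal illustration under several assumptions:

- Prices are quoted in US dollars per million input and output tokens.
- The model names and prices are placeholders, not real quotes.
- Token counts are taken as given rather than computed with any particular tokenizer.

The sketch ranks by cost alone. In practice, "best value" also weighs output quality.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    name: str
    input_per_mtok: float   # USD per 1M input tokens (placeholder value)
    output_per_mtok: float  # USD per 1M output tokens (placeholder value)


def estimate_cost(price: ModelPrice, input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one call with the given token counts."""
    return (input_tokens * price.input_per_mtok
            + output_tokens * price.output_per_mtok) / 1_000_000


def rank_by_cost(prices: list[ModelPrice], input_tokens: int,
                 output_tokens: int) -> list[tuple[str, float]]:
    """Return (model, estimated cost) pairs, cheapest first."""
    estimates = [(p.name, estimate_cost(p, input_tokens, output_tokens)) for p in prices]
    return sorted(estimates, key=lambda pair: pair[1])


# Placeholder catalogue: names and prices are illustrative only.
PRICES = [
    ModelPrice("model-a", 3.00, 15.00),
    ModelPrice("model-b", 0.50, 1.50),
    ModelPrice("model-c", 0.10, 0.40),
]

if __name__ == "__main__":
    for name, cost in rank_by_cost(PRICES, input_tokens=2_000, output_tokens=500):
        print(f"{name}: ${cost:.6f}")
```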

The engineering is clean. Promptctl ships as a single compiled binary with no runtime dependencies: no Node.js, Python, or Docker. Homebrew installation is listed for macOS (Intel and Apple Silicon), Linux, and Windows. Prompt generation runs locally and deterministically, so it returns immediately, with no external API calls or network latency.

The product claims that a well-structured prompt costs roughly one-third as much per call as an unstructured one. It puts total savings at 55 to 71 percent, depending on model selection and workload, and states that these figures were validated across ten models. The tool targets developers and teams who run LLMs as production infrastructure and see their API spending directly.
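The two figures are related by simple arithmetic. If a structured prompt costs one-third as much as the unstructured version, the per-call saving is 1 - 1/3, or about 66.7 percent. That falls inside the claimed 55 to 71 percent range. The helper below reproduces this calculation for a hypothetical workload. The call volume and per-call cost are made-up inputs for illustration, not Promptctl benchmark data.

```python
def workload_cost(calls: int, cost_per_call: float) -> float:
    """Total USD cost of a workload with a fixed per-call cost."""
    return calls * cost_per_call


def savings_percent(baseline: float, optimized: float) -> float:
    """Percentage saved relative to the baseline cost."""
    if baseline <= 0:
        raise ValueError("baseline cost must be positive")
    return 100.0 * (baseline - optimized) / baseline


if __name__ == "__main__":
    # Hypothetical workload: 10,000 calls; the structured prompt costs 1/3 as much per call.
    unstructured = workload_cost(10_000, 0.012)
    structured = workload_cost(10_000, 0.012 / 3)
    print(f"${unstructured:.2f} -> ${structured:.2f}: "
          f"{savings_percent(unstructured, structured):.1f}% saved")
```

A single ratio gives a single number. A range like 55 to 71 percent arises when the per-call ratio varies with the model and workload.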

Promptctl occupies a narrow but defensible position: it solves a genuine cost problem for a specific audience without feature sprawl. It concentrates on three capabilities: structuring prompts efficiently, comparing model costs transparently, and reducing token waste through better prompt composition. No pricing or business model details are disclosed.

Key Features

Rule-Based Optimization

Converts natural language intent into structured, optimized prompts using established best practices without API calls

Task Classification Engine

Automatically classifies input against eleven task categories and assigns expert personas with XML-tagged, decomposed instructions

Model Cost Comparison

Compares execution costs across ten major models, including Claude Sonnet, GPT-5, Llama, DeepSeek, and Groq-hosted models, before running

Zero-Dependency Binary

Ships as a single compiled binary installable via Homebrew across macOS, Linux, and Windows with no external dependencies

Native Cost Tracking

Tracks API costs and token usage natively, and supports direct integration with the Claude CLI or independent use
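Native cost tracking amounts to keeping running totals of tokens and spend per model as calls complete. Below is a minimal in-memory sketch. `CostLedger` and its methods are hypothetical and say nothing about how or where Promptctl actually stores usage data. The per-call dollar amounts are placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Usage:
    input_tokens: int = 0
    output_tokens: int = 0
    usd: float = 0.0


class CostLedger:
    """Hypothetical in-memory running totals of token usage and spend, keyed by model."""

    def __init__(self) -> None:
        # Unknown models start from a zeroed Usage record.
        self._by_model: defaultdict[str, Usage] = defaultdict(Usage)

    def record(self, model: str, input_tokens: int, output_tokens: int, usd: float) -> None:
        """Add one completed call's usage to the model's totals."""
        usage = self._by_model[model]
        usage.input_tokens += input_tokens
        usage.output_tokens += output_tokens
        usage.usd += usd

    def total_usd(self) -> float:
        """Spend summed across all models."""
        return sum(u.usd for u in self._by_model.values())

    def report(self) -> list[tuple[str, Usage]]:
        """Per-model totals, most expensive first."""
        return sorted(self._by_model.items(), key=lambda item: item[1].usd, reverse=True)


if __name__ == "__main__":
    ledger = CostLedger()
    ledger.record("model-a", 2_000, 500, 0.0135)  # placeholder costs
    ledger.record("model-a", 1_000, 200, 0.0060)
    ledger.record("model-b", 3_000, 800, 0.0027)
    for model, usage in ledger.report():
        print(model, usage)
    print(f"total: ${ledger.total_usd():.4f}")
```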

Use Cases

Production LLM Teams

Organizations running large language models as production infrastructure can cut API bills by a claimed 55 to 71 percent with structured prompts

Cost-Conscious Developers

Engineers with direct visibility into API spending optimize prompts locally to lower token waste and monthly costs

Model Evaluation Teams

Companies comparing multiple LLM providers can preview cost differences before committing to any execution

FAQ

Does Promptctl require internet or external API calls?

How much can Promptctl reduce my LLM API costs?

Which AI models can I compare costs across?

How do I install Promptctl?
