Best Engineering & Development Startups & Tools

Recently Listed

39 launches Featured

Teams shipping web or mobile apps with limited QA headcount end up choosing between slow manual testing and brittle scripted automation. Agentiqa removes that compromise by letting product managers or engineers paste a URL and have an autonomous AI act as a tireless human tester. The tool starts where most cloud services stop: it runs directly on the developer’s machine, so localhost and internal staging environments are covered without any CI setup. That alone makes it attractive for startups that push nightly builds to feature branches hidden behind firewalls. Beyond local support, the agent examines the rendered interface as a user would, relying on computer vision instead of brittle DOM selectors. Once it discovers a bug, whether a visual glitch, a broken state, or simply frustrating UX, it records a video, writes concise reproduction steps, and folds the new insight into a reusable QA plan. Each iteration refines the plan, so the test suite heals itself and becomes more valuable over time. Privacy concerns are addressed head-on: source code never leaves the developer’s workstation, and credentials are encrypted so the AI can type a password without ever learning its value. Companies bound by GDPR, HIPAA, or internal compliance rules can therefore invite the agent onto sensitive apps without opening a proverbial back door. The product is offered as a downloadable desktop client, complemented by Agentiqa Web for cloud runs that can be triggered from any browser. Pricing and usage tiers are not yet disclosed, but the “no per-run cloud overhead” claim suggests an approachable model for smaller teams, while local-first execution removes the queueing penalty that often sabotages fast iterations.

Configuring a fresh Mac is a repetitive slog. Every new machine means reinstalling Homebrew packages, copying dotfiles, adjusting system preferences, syncing hotkeys, and reconfiguring shell environments. For developers juggling multiple machines—whether freelancers working across client infrastructure or IT teams managing MDM-enrolled fleets—this overhead drains productivity and invites consistency errors. Mac-onboarding solves this by capturing an entire configuration state from one machine and replaying it on another with a single command. The export step archives 21 distinct configuration modules, spanning Homebrew packages, shell configs, system settings, application preferences, hotkeys, and dozens of specialized tools. The install step unpacks everything onto a fresh target Mac, automating what would otherwise require manual recreation. What distinguishes this tool from simpler dotfile repos or conventional configuration management approaches is its explicit respect for the constraints of managed environments. Organizations using Mobile Device Management to enforce security policies risk breaking enrollment if configuration tooling overwrites protected system defaults. Mac-onboarding acknowledges this friction—it explicitly refuses to touch settings that MDM controls, and it avoids migrating SSH keys that require careful per-environment handling. This pragmatism signals the tool was built by someone who has actually operated within corporate infrastructure, not just imagined it. Privacy is similarly foregrounded as a first-class concern rather than an afterthought. The entire workflow runs offline and locally. Secrets—API keys, git credentials, and other sensitive material extracted from shell configuration files—are automatically redacted before archiving, preventing accidental leakage. The archive is inspectable via standard tar utilities, giving users genuine transparency about what gets captured and stored. 
The product supports 21 modules covering major development tools (Kitty, Claude, Tailscale, OrbStack), utilities (Alfred, Synology, 1Password), and system-level preferences. A bridge mode allows pulling configuration directly from a source machine via Tailscale SSH, bypassing the archive step entirely for environments with direct network access. The tool is open source under the MIT license, available via Homebrew or direct download, and built as a single compiled binary with no runtime dependencies. There is no mention of pricing or proprietary licensing, confirming this is a free utility maintained by its creator for the developer community.
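The redaction step described above can be pictured with a small sketch. This is not Mac-onboarding's actual implementation, which the source does not describe; the name patterns, the `<REDACTED>` placeholder, and both function names are assumptions chosen for illustration. It errs toward over-redaction, since any variable whose name contains one of the listed words is masked.

```python
import re

# Hypothetical heuristic: variable names that look like they hold secrets.
SECRET_NAME = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD)", re.IGNORECASE)

# Matches simple shell assignments such as `export NAME=value` or `NAME=value`.
ASSIGNMENT = re.compile(r"^(\s*(?:export\s+)?)([A-Za-z_][A-Za-z0-9_]*)=(.*)$")


def redact_line(line: str) -> str:
    """Mask the value of an assignment whose variable name looks sensitive."""
    match = ASSIGNMENT.match(line)
    if match and SECRET_NAME.search(match.group(2)):
        return f"{match.group(1)}{match.group(2)}=<REDACTED>"
    return line


def redact_shell_config(text: str) -> str:
    """Apply redact_line to every line of a shell config file's contents."""
    return "\n".join(redact_line(line) for line in text.splitlines())


sample = 'export PATH="$HOME/bin:$PATH"\nexport OPENAI_API_KEY=sk-123\nalias ll="ls -l"'
print(redact_shell_config(sample))
```

A real tool would also need to handle secrets embedded in commands, heredocs, and sourced files, which a line-by-line pattern like this one cannot see.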

Teams that live inside Telegram, WhatsApp, Slack, or Discord spend their days opening yet another tab just to ask a bot for help. OpenClaw Direct removes that friction by putting a single, private AI coworker right where the messages already flow. Early adopters who lack the appetite, or the headcount, for DevOps but need Claude-grade intelligence on their own data can spin up a complete environment without writing a deployment script. The allure lies in the five-minute onboarding and the locked price of nineteen dollars a month, cancellable whenever the experiment loses its shine. Beyond provisioning, the platform behaves like a teammate who never forgets. It consumes inbox threads, staging deployments, support tickets, pull-request noise, SSL expirations, marketing figures, and half-written drafts, then surfaces only the decisions that still require human judgment. Code reviews happen in-chat, with critical issues patched and tests re-run before the reviewer reaches for coffee. Customer tickets get drafted replies, while feature requests bubble into a shared roadmap where community weight can be tracked with tags. Blog traffic gets analysed on the fly and turned into scheduled social threads, with open rates reported back as early morning banter. Ownership stays with the customer: the assistant lives on a dedicated machine, listens exclusively to the API key they supply, and connects to the chat apps they already trust. Whatever internal context, documents, or repositories the team grants access to remains unseen by anyone else. The built-in dashboard simply tracks the number of messages, workflows completed, and time reclaimed, which is enough data to justify the monthly coffee budget the tool replaces.

Micro-service teams waste untold hours sweeping up stale containers, juggling Git resets, and hunting down “it works on my machine” gremlins; dcli compresses that busywork into three verb-heavy commands. The utility targets any developer who juggles Docker Compose stacks and multiple source repositories on a daily basis, which is essentially anyone who has cursed at a half-dead dev environment five minutes before stand-up. What elevates dcli above a dusty binder full of shell aliases is its ruthless focus on single-shot outcomes. Resetting state means one shot, one story: ask for “docker clean api web” and it tears down the listed containers, purges volumes, rebuilds images, and restarts only the services you name, while keeping persistent volumes intact. Repeat the same mindset on the Git side when you tell it to “git reset develop”; the CLI fetches upstream and snaps each configured repository onto the exact branch without you ever having to open another window. It reports successes and failures in terse, colored lines, sparing you the Kubernetes-grade prose dump. The binary is delivered via Homebrew on macOS and Linux, with direct executables for Windows, so onboarding is literally two shell commands and a version check. No setup dance, no cloud service to register: just fetch, drop it in your PATH, and start pruning noise from local dev. Because the entire surface area is nine sub-commands wrapped in a Go binary, updates are equally light; a new tag shows up in the tap, you pull, done. No pricing information is surfaced on the landing page, nor is there any reference to paid tiers or enterprise licensing; the code lives in a public GitHub repository and binaries are distributed free of charge today. That leaves room for future monetization, but right now the pitch is simple: dcli trades ceremony for speed, and if you live in Docker and Git all day, that trade is convincingly one-sided.
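To make the “git reset develop” behavior concrete, here is a rough sketch of the commands such a tool would need to run for each repository. It is an approximation built from the description above, not dcli's actual code: the function name `reset_plan`, the default remote `origin`, and the choice of a hard reset are all assumptions. The function only builds the command list, so it can be inspected before anything destructive runs.

```python
from typing import List


def reset_plan(repos: List[str], branch: str, remote: str = "origin") -> List[List[str]]:
    """Build, without executing, the git commands that fetch upstream and
    snap each repository onto the given branch."""
    plan: List[List[str]] = []
    for repo in repos:
        # `git -C <path>` runs the command as if started inside <path>.
        plan.append(["git", "-C", repo, "fetch", remote])
        plan.append(["git", "-C", repo, "checkout", branch])
        # Assumption: local changes are discarded to match the remote branch.
        plan.append(["git", "-C", repo, "reset", "--hard", f"{remote}/{branch}"])
    return plan


for command in reset_plan(["services/api", "services/web"], "develop"):
    print(" ".join(command))
```

Each entry can be passed to `subprocess.run(command, check=True)` to execute it; keeping planning and execution separate also makes a dry-run mode trivial.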

Managing API costs for AI coding tools is a practical concern developers face regularly. When integrating Claude, Codex, Z.ai, or Minimax into your workflow, exceeding your usage limit or hitting rate ceilings can disrupt development or trigger unexpected charges. Code Meter addresses this problem by delivering real-time usage monitoring in the macOS menu bar, giving developers visibility into consumption before issues occur. The product's core value is immediate and simple: install it, authenticate with your chosen provider, and see usage metrics without checking dashboards or guessing remaining capacity. Setup completes in seconds, and the app supports four major AI coding providers, making it relevant across different tool preferences. What distinguishes Code Meter is its privacy architecture. Rather than funneling credentials through intermediary services, the application reads credentials locally from macOS Keychain and communicates directly with each provider's API: Anthropic, OpenAI, Z.ai, or Minimax. Credentials never leave your device. Usage history is stored locally via SwiftData, and widget data remains isolated in App Group containers. This design choice appeals to developers concerned about credential exposure, especially in regulated industries or security-sensitive environments. The privacy commitment extends to analytics. Code Meter uses PostHog for anonymous product telemetry, recording only app version, OS version, and feature interactions, hosted on EU Cloud infrastructure with IP capture and device fingerprinting disabled. It represents a transparent approach to usage analytics; the company documents what it collects and explicitly discloses why. The feature set covers essentials: the menu bar widget shows usage at a glance, additional widgets provide supplementary views, and historical charts enable tracking over time. Alerts flag overages before they compound. The product is a free download from the Mac App Store, requiring macOS 26 or later.
RevenueCat infrastructure suggests potential premium features, though none are documented currently. Code Meter solves a concrete problem for developers managing multiple AI APIs with a privacy-first architecture that rejects the surveillance model prevalent in developer tools. Its strength lies in restrained functionality delivered without data extraction. Developers get visibility where it matters—their own usage—without surrendering credentials or behavioral data to another platform.

Building AI agents that can operate in the real world requires bridging the gap between digital systems and traditional communication channels. AgentCall solves a critical problem: enabling AI agents to interact via phone—both making outbound calls and receiving inbound communication—without the friction and failures that plague existing VoIP-based approaches. The core offering is elegant in scope. Developers provision real SIM-backed phone numbers through an API, connect their agents with a single API key, and receive all incoming calls and SMS messages through webhooks. The platform handles provisioning in seconds, supports country and capability selection, and guarantees that numbers pass strict platform verification checks that typically block VoIP alternatives. For AI agents, this means actually being able to register accounts, complete SMS-based verification flows, and operate in environments where traditional virtual numbers get rejected. What distinguishes AgentCall is how it handles the full communication stack. Voice calls aren't just passive; agents initiate outbound calls with AI-powered conversation using one of eight distinct voice options—from the neutral "Alloy" to the energetic "Shimmer"—each tuned for different contexts. The AI voice system accepts a system prompt and autonomously manages the conversation, returning a full transcript. This makes customer service outreach and verification workflows genuinely practical. On the messaging side, agents get a dedicated SMS inbox per number, send and receive messages, and automatically extract verification codes from incoming SMS, delivering them to webhook endpoints in real-time. The architecture reflects strong security thinking. Each agent gets its own isolated number, preventing compromise of one agent from cascading across others. The async, webhook-based design eliminates the need for persistent connections or complex state management. 
The platform supports diverse use cases: agents test SMS-based authentication on their own apps, run outbound calling campaigns with follow-up SMS, maintain two-way SMS conversations, and handle inbound calls through webhook forwarding. This breadth indicates the founders understood the landscape of agentic workflows rather than optimizing for a single scenario. The "Works with MCP" mention signals integration with the Anthropic Model Context Protocol, positioning AgentCall within the broader AI infrastructure stack. For developers building sophisticated AI agents that need reliable phone capabilities, AgentCall delivers what the market currently lacks—a practical alternative to the constraints and unreliability of virtual number services.
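The verification-code extraction mentioned above is easy to picture with a short sketch. AgentCall's real extraction logic and webhook payload format are not documented in the source, so everything here is an assumption: the function name, the 4-to-8-digit rule, and the idea of taking the first match.

```python
import re
from typing import Optional

# Assumption: a verification code is a standalone run of 4 to 8 digits.
# The lookarounds reject slices of longer numbers such as phone numbers.
CODE = re.compile(r"(?<!\d)(\d{4,8})(?!\d)")


def extract_code(body: str) -> Optional[str]:
    """Return the first code-like digit run in an SMS body, or None."""
    match = CODE.search(body)
    return match.group(1) if match else None


print(extract_code("Your verification code is 482913. It expires in 10 minutes."))
print(extract_code("Call us at 18005551234"))
```

A production version would likely need per-sender rules, since some services send alphanumeric codes or include other short numbers, such as order IDs, ahead of the code.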

Evaluating AI infrastructure tools sprawls across dozens of specialized vendors, pricing models, and documentation sites, creating significant friction for teams assembling their tech stack. Infrabase.ai consolidates this fragmentation into a single directory organized by functional category—vector databases, prompt engineering tools, observability platforms, inference APIs, and more—making it possible to compare options within each domain without hunting across the web. The directory serves builders deciding which AI infrastructure components to adopt: founders prototyping at seed stage, engineering teams scaling inference and observability, and architects selecting vector database solutions. The categories span the full infrastructure stack, from foundational services like vectorization and embedding APIs to higher-order tools for prompt management, agent monitoring, and evaluation frameworks. What distinguishes Infrabase from generic tool aggregators is the specificity of its curation. Each category contains substantive options rather than purely aspirational listings. The directory emphasizes practical attributes: it flags open-source projects alongside commercial offerings, marks free trial availability, and acknowledges the diversity of deployment models—serverless, self-hosted, EU-sovereign—relevant to different organizational constraints. This matters because infrastructure decisions often turn on operational characteristics like data residency and cost scaling, not just feature parity. The founder built Infrabase from direct experience evaluating infrastructure for a real project, accumulating working lists of products and technical notes substantial enough to justify sharing. This origin explains the site's practical bias. Rather than listing every tangential tool, it focuses on products that demonstrably function within specific categories. 
The selection acknowledges that the AI infrastructure market extends far beyond dominant cloud providers, a reality that reshapes purchasing power for teams taking AI seriously. The directory's limitations stem from its breadth. With sixty-one inference APIs, twenty vector databases, and comparable volumes across categories, individual product comparisons flatten into metadata. Users cannot evaluate full feature matrices, benchmark results, or integration patterns within the directory itself. The site succeeds by redirecting focus to vendor pages rather than attempting comprehensive comparison. For teams in early evaluation stages this works appropriately; for detailed diligence it points the right direction without replacing specialized analysis.

Catching database performance regressions before they reach users requires both visibility into query execution and the discipline to enforce latency budgets. Queryd addresses this gap by instrumenting SQL queries in Node.js applications with measurable performance guardrails. The tool wraps database clients at multiple levels—supporting postgres.js tagged templates, raw query functions, or Prisma—to intercept queries and measure their execution time against configurable thresholds. The product solves a real pain point for teams building latency-sensitive applications. Query performance degrades gradually, and without systematic detection, slow queries often go unnoticed until they cause visible impact. Queryd brings three mechanisms to prevent this: per-query latency thresholds that flag individual slow queries, per-request query budgets that set cumulative limits on database work within a single user request, and sampling controls that keep observability costs minimal in production. What distinguishes queryd is its pragmatic design philosophy. Rather than requiring a complete database abstraction or architectural restructuring, it integrates at the query execution layer across multiple driver APIs. The sampling-first approach acknowledges that continuous monitoring of all queries in high-traffic applications becomes prohibitively expensive; instead, teams can set sampling rates to stay within their observability budget while still surfacing meaningful regressions. Optional EXPLAIN ANALYZE integration allows deeper investigation of offending queries when needed, shifting between cheap signal and expensive detail. The implementation provides useful context awareness through request-scoped budgets—tracking not just individual query times but also cumulative query volume and duration within a single request. This catches a different class of performance issues: endpoints that perform many quick queries instead of fewer optimized ones. 
The configurable sink architecture suggests thoughtful extensibility, allowing teams to route alerts to their existing monitoring systems rather than forcing a new workflow. As an early-stage open-source project, queryd makes a modest but useful contribution to the Node.js observability ecosystem. It fills a specific niche—SQL query latency monitoring with minimal overhead—without attempting to be a comprehensive database performance platform. Teams already running SQL databases in production and concerned with query regressions will find the tool immediately applicable to their latency budgeting workflow.
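The three mechanisms described above (per-query thresholds, per-request budgets, and sampling) fit in a few lines. The sketch below is language-agnostic in spirit and written in Python for brevity; queryd itself targets Node.js, and none of these names (`RequestBudget`, `record`, `violations`) come from its API. One design choice is assumed here: sampling gates only the per-query reports, while the request counters always update so budgets stay exact.

```python
import random
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class RequestBudget:
    """Tracks the database work done while serving one request."""
    max_queries: int          # per-request cap on query count
    max_total_ms: float       # per-request cap on cumulative query time
    slow_query_ms: float      # per-query latency threshold
    sample_rate: float = 1.0  # fraction of slow queries actually reported
    rng: Callable[[], float] = random.random
    count: int = 0
    total_ms: float = 0.0
    events: List[str] = field(default_factory=list)

    def record(self, sql: str, elapsed_ms: float) -> None:
        # Counters always update, so budget checks are never sampled away.
        self.count += 1
        self.total_ms += elapsed_ms
        # Only the (potentially expensive) slow-query report is sampled.
        if elapsed_ms > self.slow_query_ms and self.rng() < self.sample_rate:
            self.events.append(f"slow_query {elapsed_ms:.0f}ms: {sql}")

    def violations(self) -> List[str]:
        """List which per-request budgets were exceeded."""
        found = []
        if self.count > self.max_queries:
            found.append(f"query_count {self.count} > {self.max_queries}")
        if self.total_ms > self.max_total_ms:
            found.append(f"total_ms {self.total_ms:.0f} > {self.max_total_ms:.0f}")
        return found


budget = RequestBudget(max_queries=2, max_total_ms=100.0, slow_query_ms=50.0)
for sql, ms in [("SELECT 1", 10.0), ("SELECT * FROM orders", 80.0), ("SELECT 2", 20.0)]:
    budget.record(sql, ms)
print(budget.events, budget.violations())
```

The example request trips both budgets: three quick-to-moderate queries exceed the count cap and the cumulative time cap even though only one of them is individually slow, which is the class of regression request-scoped budgets exist to catch.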

A Varanasi-based digital agency founded by Shashwat Maurya, Synor addresses a gap in the Indian software market where regional businesses need production-grade custom applications but have historically been forced to either hire expensive enterprise software houses or settle for template-based solutions. The agency's primary value is demonstrated through two live projects launched within six months of its founding. TheDawai is a full-stack pharmacy e-commerce platform paired with backend management software for the healthcare sector in Uttar Pradesh. Shivora Technologies operates as a multi-tenant school management system currently supporting five or more institutions with real-time data management across the state. Both systems handle production workloads—processing actual transactions, managing student and patient records, and supporting dozens of concurrent users continuously. What distinguishes Synor from the broader landscape of web agencies and freelancers in UP is the scope of what it builds. The deliverables are not websites, landing pages, or WordPress installations. Instead, Synor delivers systems designed to manage sensitive data reliably, operate under real load, and scale to institutional needs. The education and healthcare sectors demand this level of robustness, and the fact that both projects reached operational status in six months indicates engineering competence and execution efficiency uncommon in the regional market. The agency frames these two projects as proof of capability. For organizations in healthcare, education, or other sectors needing custom software, Synor claims it can deliver what previously required engagement with large enterprise vendors charging ₹20-50 lakhs over 18+ months. This represents a significant acceleration of both timeline and cost structure for institutions that historically had limited alternatives between expensive vendors and generic solutions. 
No specific pricing or business model details are disclosed in the available content. The agency operates on a project basis, handling the design, development, and deployment of domain-specific software platforms. For clients in UP's institutional and commercial sectors needing custom software built at industrial grade and delivered rapidly, Synor offers an alternative to both expensive enterprise consultancies and generic template solutions, backed by documented examples of execution.

Access to region-locked content and IP masking are the core use cases that Proxy Solutions addresses through a global proxy network. The service targets developers, marketers, data researchers, and network administrators who need reliable proxy infrastructure to bypass geographic restrictions or maintain privacy in their operations. The platform distinguishes itself through breadth rather than specialization. Instead of focusing on a single proxy category, Proxy Solutions bundles personal proxies, package proxies, mobile proxies, UDP proxies, and multi-protocol options alongside VPS and dedicated server infrastructure. The company maintains 200+ global locations sourced from legitimate internet service providers and carriers worldwide, with individual endpoints distributed across different geographic regions and IP ranges. Technical execution prioritizes stability. The service claims 99.97% uptime with continuous equipment monitoring and proxy throughput reaching 100 MB/sec. Authentication supports both credential-based and IP-based approaches, with HTTP/HTTPS and SOCKS5 connection types available. This flexibility accommodates diverse integration scenarios across applications and workflows without forcing users into a single architectural choice. Automation drives user onboarding. Proxies appear in personal dashboards immediately after payment, and an API enables programmatic ordering and management for developers. Multi-channel support through website and messenger-based bots reduces friction compared to traditional ticketing systems. Support is available around the clock for issues of any complexity. Pricing strategy emphasizes accessibility. Purchases range from single IP addresses to tens of thousands, with subscription periods spanning one month through extended terms featuring automatic renewal. A 25% affiliate commission incentivizes reseller partnerships. A refund guarantee applies if proxies fail to provision.
The service succeeds in consolidating infrastructure. Users seeking only proxies might explore specialists, but organizations wanting integrated proxy, VPS, and dedicated server options under one vendor find consolidated management valuable. The geographic scale and uptime metrics position this as infrastructure-grade rather than consumer-tier, though the proxy market remains crowded with competitors offering similar technical baselines. Proxy Solutions' primary differentiation rests on coverage breadth combined with automated provisioning and multi-protocol flexibility. These factors address operational complexity for organizations running distributed infrastructure, but they represent incremental improvements rather than fundamental advantages over established competitors in this category.

Infrastructure teams managing Zabbix monitoring systems face a persistent challenge: critical alerts get lost in noise or delayed in reaching the right people. NZBX addresses this by channeling Zabbix notifications through WhatsApp, transforming a ubiquitous messaging platform into a real-time incident command center. The product targets DevOps and infrastructure teams already running Zabbix but wanting faster, more direct alert delivery. Instead of checking dashboards or waiting for email, incidents appear instantly in WhatsApp where team members already spend their working day. What distinguishes NZBX is its simplicity and speed. The service requires no server installation—it connects to existing Zabbix instances through API authentication and delivers alerts in under three seconds. Setup takes five minutes, placing it at the low-friction end of the integration spectrum. End-to-end encryption and stated LGPD compliance address data security concerns when routing infrastructure alerts through third-party services. Beyond basic alerting, NZBX includes a dashboard for tracking metrics, interactive graphs, detailed reports, and data export. An AI-powered grouping system suppresses redundant alerts, with the platform claiming an 80 percent noise reduction. The service supports multiple Zabbix instances, granular user permissions, and access logging, indicating it's built for teams rather than solo operators. The stated 99.9 percent availability target and 24/7 support position it as infrastructure-grade tooling. The integration strategy extends beyond Zabbix. The platform mentions compatibility with webhooks, GPT integration, and other monitoring tools, suggesting a broader alert aggregation roadmap. Up to 50 simultaneous users can access the system, and documentation appears comprehensive. Pricing remains opaque. The site emphasizes free trials and no installation requirements but provides no transparent pricing details. 
For teams drowning in Zabbix alert fatigue, NZBX offers a pragmatic shortcut to faster incident response. The product's actual value depends on execution: whether the sub-three-second delivery holds consistently and whether AI-powered grouping cuts noise without suppressing critical alerts. These are testable claims worth validating before committing a team to the platform.
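One way to reason about the noise-reduction claim is to compare it against a simple rule-based baseline. The sketch below is explicitly not NZBX's AI-powered grouping, whose workings are not described in the source; it is a plain time-window deduplication, with the function name, the five-minute window, and the `(timestamp, host, trigger)` alert shape all assumed for illustration.

```python
from typing import Dict, Iterable, List, Tuple

# Hypothetical alert shape: (timestamp in seconds, host, trigger name).
Alert = Tuple[float, str, str]


def suppress_repeats(alerts: Iterable[Alert], window_s: float = 300.0) -> List[Alert]:
    """Keep an alert only if the same host and trigger has not been
    delivered within the last `window_s` seconds."""
    last_sent: Dict[Tuple[str, str], float] = {}
    kept: List[Alert] = []
    for ts, host, trigger in sorted(alerts):
        key = (host, trigger)
        previous = last_sent.get(key)
        if previous is None or ts - previous >= window_s:
            kept.append((ts, host, trigger))
            last_sent[key] = ts
    return kept


incoming = [
    (0.0, "db1", "High CPU"),
    (60.0, "db1", "High CPU"),    # repeat inside the window, suppressed
    (120.0, "web1", "Disk full"),
    (400.0, "db1", "High CPU"),   # window elapsed, delivered again
]
print(suppress_repeats(incoming))
```

Replaying a day of real Zabbix alerts through a baseline like this gives a reference number: if simple deduplication already removes most of the repeats, the interesting question becomes what the AI grouping suppresses beyond that, and whether any of it was critical.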

Automating the conversion of visual designs into functional code addresses a genuine pain point in modern development workflows. Screenshot to Code targets developers and designers grappling with design-to-development handoffs, whether that's individuals prototyping quickly or teams moving designs from Figma into production applications. The tool eliminates hours of manual HTML, CSS, and JavaScript work required to match mockups pixel-for-pixel. What distinguishes this product is its range of framework support and execution speed. Rather than locking users into a single output format, Screenshot to Code generates code across multiple paradigms: vanilla HTML and CSS, React with JSX and TypeScript support, Vue single-file components, Next.js components, Tailwind CSS utility classes, Bootstrap, Ionic, and SVG. This flexibility means developers can feed it a screenshot and receive output in their framework of choice. The core technology uses AI-powered visual recognition to identify UI components—buttons, forms, navigation menus, cards, images—with the precision required for production work. It reconstructs these elements while preserving layout, spacing, typography, colors, and responsive breakpoints exactly as they appear in the original design. Users can upload PNG, JPG, or WebP files from any source: website screenshots, Figma designs, Sketch mockups, or hand-drawn wireframes. The tool outputs semantic, well-structured code suitable for direct integration into projects. Generated code is downloaded or copied directly to the clipboard. What the tool notably doesn't do is generate application logic or backend integration—it strictly converts visual elements to front-end code. Developers still need to wire up interactivity and data flows themselves. The product operates on a credit-based system, with each conversion consuming a fixed number of credits, though explicit pricing details aren't available. 
The value proposition is straightforward: it removes the bottleneck of translating visual designs into responsive, semantic code. For teams with heavy design-to-code workflows, that efficiency gain is meaningful. The tool's real-world effectiveness ultimately depends on how it handles complex nested layouts and edge cases beyond simple UI patterns.

Everyday problems rarely deserve complicated solutions, and this collection of online utilities recognizes that insight with practical precision. The platform consolidates a diverse range of free calculators and converters into a single, searchable interface—tools for home improvement, pet care, student academics, personal finance, and health. Users access everything without registration and without the typical clutter that burdens many productivity sites. The breadth of offerings is genuinely thoughtful. Rather than stopping at generic calculators, the site includes specialized tools for specific audiences: VTU SGPA and CGPA calculators for Indian engineering students, a dog feeding guide calibrated by weight and age, an ovulation predictor for family planning, and a tile calculator for construction projects. This specificity signals a design philosophy oriented toward solving real, contextual problems rather than chasing viral adoption through novelty. Developer-focused tools like a JSON-to-CSV converter and regex tester with live match highlighting serve technical professionals, while a Unix timestamp converter that displays results across 30 timezones demonstrates attention to detail beyond the bare minimum. A currency converter supporting 160+ currencies with rates updated every six hours provides genuine utility for anyone managing international finances or travel. The inclusion of a pomodoro timer and sleep cycle calculator suggests the creators understand that productivity and wellness tools often belong together in daily workflows. The interface design prioritizes speed and discoverability. A search function lets users locate tools by keyword, and categorical organization reduces browsing friction. Tools load instantly, deliver results immediately, and make no demands on user attention beyond the core task. The repeated emphasis on no registration creates a clear market positioning against convenience friction as much as against feature depth. 
What remains unstated is how the operation sustains itself. No pricing information appears in the available content, and the decision to remain entirely free—with no visible premium tier or account-based features—leaves the business model unclear. This gap between user value and revenue mechanics warrants scrutiny before building significant reliance on the platform's continued operation. For users seeking straightforward tools that solve specific, immediate problems without registration overhead, the platform delivers on its promise. The combination of breadth, specificity, and polish positions it as a genuine alternative to scattered single-purpose websites or feature-bloated all-in-one suites.

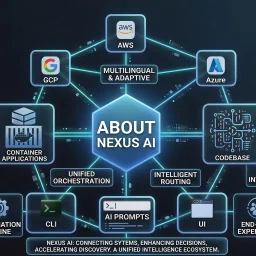

Automating the path from AI-generated code to production deployment addresses a real friction point for development teams. As AI coding assistants become standard tools in most engineering workflows, the challenge of taking those suggestions and deploying them with confidence to live infrastructure has become increasingly pressing. NEXUS AI targets this specific gap with a platform designed to streamline the journey from prompt to production application. The founding insight—that turning AI-generated code into production-ready applications should require minimal friction—reflects a genuine workflow problem. Teams today use AI to prototype and scaffold code, but translating those outputs into deployed services requires orchestrating containerization, cloud infrastructure, monitoring, and observability. NEXUS AI consolidates these typically fragmented steps. The platform's core value proposition centers on instant deployment across major cloud providers. By supporting AWS, Google Cloud, and Azure, it avoids lock-in and lets teams choose their preferred infrastructure. More importantly, it abstracts away the operational complexity that normally accompanies deployment, which matters when the goal is velocity—getting AI-generated code into users' hands quickly to validate whether it actually solves the intended problem. Built-in observability represents a critical feature choice. Deploying code without visibility into its runtime behavior is risky, particularly when that code originated from AI systems. By including monitoring and observability from the start, the platform helps teams catch regressions and understand performance characteristics in production rather than discovering problems after incidents occur. The positioning targets teams already embedded in AI-assisted development workflows. 
This includes startups using AI to accelerate product development, established engineering teams exploring generative coding tools, and organizations looking to compress their code-to-deployment cycle. For these groups, the appeal lies not in managing individual cloud services but in removing intermediate manual steps that create delays and opportunities for misconfiguration. The critical question for potential users is whether the platform's abstraction layer and automatic deployment strategy align with their security, compliance, and architectural requirements. Some teams may find the instant-deployment approach refreshing; others operating under strict controls may find it too opinionated. But for teams prioritizing speed and developer experience in environments where that tradeoff makes sense, the problem NEXUS AI solves is both real and increasingly relevant.

Monitoring for SQL Server and Windows infrastructure remains fragmented for many organizations, with teams juggling multiple tools to track database performance, server health, and compliance needs. SQL Planner attempts to consolidate these oversight responsibilities into a single platform, targeting IT directors, database administrators, and system admins who spend significant resources managing sprawling database environments across networks. The platform's core strength lies in its integrated approach. Rather than forcing teams to piece together separate monitoring solutions, it combines SQL performance tracking, Windows server metrics, security auditing, and automated backup capabilities under one interface. The web-based architecture supports browser and mobile access, addressing the practical reality that modern ops teams need visibility from anywhere. For organizations running SQL Server Express instances or development environments with licensing restrictions, the agentless monitoring approach offers particular advantages by avoiding additional agent overhead on constrained systems. Diagnostics appear central to the product's value proposition. The platform advertises over 100 analytical reports alongside real-time query execution tracking and wait analysis, positioning it as a tool for rapid root-cause investigation rather than just metric collection. The inclusion of advanced query mining and deadlock analysis suggests it targets performance-sensitive environments where optimizing expensive queries directly impacts business outcomes. The security auditing module, which tracks DDL changes, login anomalies, and administrative actions, makes the platform relevant for regulated industries where comprehensive audit trails matter.
The feature set addresses recognizable operational pain points: backup reliability with object-level recovery options, centralized event log management across multiple servers, and automated intelligence for shift handoff documentation. For service providers managing multi-tenant or multi-customer environments, the unified management interface across diverse networks could simplify operations. Notably, the company claims a free enterprise edition that monitors unlimited Windows servers and up to 100 SQL instances, removing traditional per-server licensing costs entirely. This pricing model, if accurate, represents a significant departure from enterprise monitoring conventions. The stated efficiency claims (reducing mean time to recovery by 50 to 80 percent and lowering total cost of ownership significantly against alternatives) are ambitious assertions of a kind common to monitoring platforms, and the specific benchmarks presented aren't independently verified. The platform's ability to compete against established players like Datadog hinges on two questions: whether its unified SQL and Windows focus delivers materially better diagnostics for database-centric organizations than generalist monitoring solutions, and whether its lower-cost positioning comes without compromising scalability or reliability.

Full-stack development has long required juggling separate codebases, build systems, and deployment targets: one for web, another for mobile, yet another for the backend API. Eden Stack collapses this friction by offering a unified SaaS starter kit designed for teams building multi-platform applications where speed and code consistency matter. The core promise is straightforward: developers get a single codebase that spans web and native mobile frontends, a type-safe API layer, and integrated AI capabilities, all with transparent, auditable source code. The "no lock-in" positioning is deliberate; founders can fork the project entirely, own the infrastructure, and modify anything without vendor dependency. What distinguishes this offering is the depth of integration rather than breadth. The kit ships with over 60 UI primitives and 40 Claude-powered skills, which amount to pre-built AI agent behaviors that developers can invoke from the chat interface. The demo screenshots show an AI assistant querying databases, triggering email sends via Resend, and scheduling delayed jobs through Inngest, with the actions chained together and Claude reasoning in the loop. This isn't a generic chatbot wrapper; the architecture treats Claude as a controllable execution layer tied to your application's own backend. The type-safety story runs throughout. Eden uses Elysia for the API layer with a pattern called Eden Treaty to ensure types flow consistently between frontend and backend, reducing the runtime surprises that plague many full-stack projects. Authentication, business logic, and data schemas share definitions across all three tiers: web, mobile, and API. The included demo is functional enough to reveal the intended workflow. It showcases onboarding flows, API rate limiting, Stripe webhook handling, email template rendering, and session management, which are genuine infrastructure concerns rather than trivial examples.
These patterns suggest the kit targets founders and small teams shipping real SaaS products, not tutorial projects. Pricing follows a typical early-access model: the EARLYBIRD discount offers 50% off at $99 per license, though the full pricing structure beyond this limited cohort isn't detailed in the available content. The scarcity messaging (14 spots claimed) is standard founder playbook, but the pricing anchor itself is reasonable for a full-stack template with this level of integration. Eden Stack is fundamentally a bet that developers would rather own and customize their SaaS foundation than stay locked into a platform. For teams shipping multi-platform applications and willing to maintain their own deployment, this approach trades platform convenience for sovereignty and flexibility.

A significant shift in the SQL IDE landscape materialized when Microsoft retired Azure Data Studio in February 2026, creating an immediate need for a robust alternative. Jam SQL Studio has positioned itself squarely in this market gap, offering a modern SQL development environment purpose-built for an AI-first workflow rather than as a retrofitted legacy tool. What distinguishes this product from traditional SQL IDEs is its native integration with AI agents through the Model Context Protocol (MCP) framework, combined with an embedded Claude Code CLI. For database engineers and DevOps professionals who increasingly rely on AI-powered coding assistance, this foundation represents a meaningful departure from competitors still bolting on AI as an afterthought. The product supports an impressively broad database ecosystem (SQL Server, PostgreSQL, MySQL, MariaDB, Oracle, and SQLite), making it genuinely multi-engine in capability. The feature set covers core IDE expectations: SQL notebooks with .ipynb compatibility, intelligent code completion, visual execution plan analysis, built-in charting, and schema comparison. Beyond these fundamentals, Jam SQL Studio includes DBA-focused tooling like session management and performance monitoring across multiple database engines. For teams transitioning from Azure Data Studio, the migration path is straightforward since existing query files, notebooks, and credentials transfer directly. The pricing model emphasizes accessibility. The tool is free for personal use with no registration requirement, which is particularly significant for developers evaluating alternatives or maintaining home lab environments. This freemium approach removes friction from adoption and creates a clear upgrade path for organizations needing advanced capabilities. The product strategy becomes clearest in its timing and positioning.
Rather than competing head-to-head on feature parity with established tools like DataGrip or DBeaver, Jam SQL Studio has recognized an underserved segment: developers who need SQL IDE functionality integrated with modern AI-agent development workflows. The MCP support and Claude integration specifically target this audience, while maintaining compatibility with traditional SQL development for those who don't need AI-enhanced features. The main question for potential adopters is whether a relatively new entrant can maintain feature parity across such a broad database support matrix while simultaneously developing its AI capabilities. Nevertheless, by capturing users displaced by Azure Data Studio's retirement, Jam SQL Studio has secured an initial user base with genuine switching motivation rather than relying purely on feature advantages.

Registration fraud remains a persistent headache for online platforms, with disposable email services making it trivial for bad actors to bypass traditional signup safeguards. Pyzit addresses this vulnerability head-on with an API designed to identify and filter out temporary email addresses before they compromise user databases or inflate signup metrics with worthless accounts. The core value proposition centers on speed and simplicity. Rather than forcing platform operators to manually curate blocklists or implement homegrown detection logic, Pyzit commoditizes the detection process into a straightforward API call. This positions it squarely as infrastructure for companies managing any form of user registration—marketplaces, SaaS products, community platforms, or content networks where user quality directly impacts unit economics or operational burden. What distinguishes Pyzit in a crowded space is its aggressive pricing strategy. The service is entirely free to begin with, eliminating the friction that typically prevents small teams or bootstrapped startups from adopting fraud prevention tools. This freemium model removes a major barrier to entry and allows operators to validate whether disposable email detection actually matters for their use case before committing budget. Many fraud prevention vendors lock basic features behind paywalls; Pyzit's willingness to give away the core capability suggests confidence in its utility and a bet that usage volume will eventually drive monetization. The specifics on how Pyzit's detection engine works remain opaque from the available material. The product emphasizes being "fast" and "reliable," which are table-stakes claims for an API but nonetheless important ones—a detection service that introduces latency into signup flows or generates false positives becomes a liability rather than an asset. 
The implementation approach, coverage breadth, and false-positive rate are all relevant questions that potential users would need answered during evaluation. From a product standpoint, Pyzit tacitly acknowledges that disposable email detection is only one vector in the broader fraud picture. Comprehensive signup protection typically requires layering multiple signals—IP reputation, phone verification, behavioral analysis—but carving out this narrow problem and solving it well represents solid product focus. The platform appears oriented toward developers, suggesting an emphasis on integration ease and documentation quality, though this remains difficult to assess from the available information. For operators struggling with low-quality signups or artificial metrics inflation, Pyzit offers a narrowly targeted solution with low friction to adoption. Whether it justifies ongoing usage will ultimately depend on how meaningfully disposable emails contribute to each platform's specific fraud profile.
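
Because the available material does not document Pyzit's API, the following is only a minimal local sketch of the general technique, a domain blocklist check placed in front of signup. The `DISPOSABLE_DOMAINS` set and the `is_disposable` and `accept_signup` helpers are hypothetical names chosen for illustration, not Pyzit's endpoints or detection logic.

```python
from typing import Optional

# Illustrative blocklist only (an assumption); a real service would maintain
# a far larger, continuously updated list and likely use additional signals.
DISPOSABLE_DOMAINS = {"mailinator.com", "10minutemail.com", "guerrillamail.com"}


def extract_domain(email: str) -> Optional[str]:
    """Return the lowercased domain part, or None if the address is malformed."""
    local, sep, domain = email.strip().rpartition("@")
    if not sep or not local or not domain:
        return None
    return domain.lower()


def is_disposable(email: str, blocklist=DISPOSABLE_DOMAINS) -> bool:
    """True if the domain, or any parent domain, appears in the blocklist."""
    domain = extract_domain(email)
    if domain is None:
        return False
    parts = domain.split(".")
    # Check "sub.mailinator.com" and then "mailinator.com"; skip the bare TLD.
    return any(".".join(parts[i:]) in blocklist for i in range(len(parts) - 1))


def accept_signup(email: str) -> bool:
    """Hypothetical signup gate: reject malformed and disposable addresses."""
    return extract_domain(email) is not None and not is_disposable(email)
```

A sketch like this also makes the false-positive question concrete: every entry in the blocklist rejects all addresses at that domain, so list quality determines how many legitimate users are turned away.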

Developers working with JSON data across various formats face a persistent friction point: the need to quickly format, validate, and convert JSON without compromising privacy or navigating authentication barriers. JSONFormatters.com directly addresses this by offering a browser-native toolkit that eliminates both the signup requirement and the server-side data transmission that makes many alternative tools a risky proposition for sensitive information. The platform's differentiation centers on its privacy architecture. Rather than following the conventional SaaS model of storing user input on remote servers, the tool executes entirely within the browser, meaning JSON data never leaves a user's device. This matters considerably for developers handling API keys, customer records, or proprietary configuration files—common scenarios where uploading to third-party services introduces unacceptable security exposure. The trade-off of pure client-side processing is transparent and intentional. Feature breadth extends beyond simple prettification. The tool includes real-time validation with error detection, minification for production optimization, and a conversion suite spanning XML, YAML, CSV, SQL, Excel, HTML tables, and plain text formats. A tree viewer presents JSON hierarchically for intuitive navigation through nested structures, while a diff tool enables side-by-side file comparison. Keyboard shortcuts surface power-user workflows, and dark mode support addresses the practical consideration of extended use. The audience encompasses developers who regularly transform data formats—particularly those working with legacy systems, configuration management tools like Kubernetes and Docker Compose, or tabular export workflows. Data analysts converting JSON-formatted API responses into spreadsheet-friendly formats will find the CSV conversion particularly relevant. Students learning data transformation concepts benefit from the no-friction entry point. 
The product succeeds at restraint. It focuses on JSON manipulation without sprawling into unrelated development utilities, so the feature set stays scoped rather than bloated. No pricing information is disclosed in the product messaging, leaving the monetization approach opaque. For developers operating in security-conscious environments, this browser-based approach to routine data transformation represents a compelling alternative to conventional web-based JSON tools that require data submission to external servers.
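
The operations described for the toolkit (validation with error location, prettifying, minification, and JSON-to-CSV conversion) can be sketched in a few lines using only a standard library. This Python version is an illustration of the operations themselves, not the site's implementation, which runs as browser code; the function names are assumptions made for the example.

```python
import csv
import io
import json


def validate(text):
    """Parse JSON text; return (data, None) or (None, error message with position)."""
    try:
        return json.loads(text), None
    except json.JSONDecodeError as exc:
        return None, f"line {exc.lineno}, column {exc.colno}: {exc.msg}"


def prettify(data):
    """Human-readable form with two-space indentation."""
    return json.dumps(data, indent=2)


def minify(data):
    """Compact form with no optional whitespace."""
    return json.dumps(data, separators=(",", ":"))


def to_csv(records):
    """Convert a list of flat JSON objects to CSV text.

    Columns follow first-seen key order; keys missing from a record
    are written as empty cells.
    """
    fieldnames = []
    for record in records:
        for key in record:
            if key not in fieldnames:
                fieldnames.append(key)
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()
```

Nested objects would need flattening before CSV export; the sketch assumes flat records to stay short.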

Automating document generation has long been a pain point for businesses that need to produce high volumes of personalized outputs: invoices, contracts, certificates, and similar documents that require individual customization but follow standardized formats. PDFOutput addresses this friction by creating a bridge between two widely used platforms: Google Docs for template design and Notion's database capabilities for data management. The core workflow is straightforward and practical. Users design a Google Document with placeholder variables, then connect it to a Notion database containing the information that should populate each field. The system handles the rest, generating individualized PDFs at scale without requiring users to manually merge data or use complex programming logic. This approach makes document automation accessible to non-technical teams, a significant advantage over traditional mail merge tools or custom integration solutions. What distinguishes PDFOutput from simpler alternatives is its focus on the complete document lifecycle. Rather than limiting functionality to basic text substitution, it targets a diverse range of use cases: operational documents like reports and invoices, contractual materials, achievement certificates, and commercial quotes. This breadth suggests the platform is designed for teams across multiple departments and verticals, whether they're in finance, operations, HR, or sales. The templating model itself deserves attention. Google Docs is familiar to nearly every business user, eliminating the learning curve associated with specialized template languages. Notion databases provide a structured, visual way to manage the source data without requiring spreadsheet expertise or database administration. By leveraging tools people already know, PDFOutput reduces adoption friction and makes it feasible for small teams to implement without dedicated technical support. The automation angle is crucial for the target market.
Generating documents at scale—whether that means hundreds of customer invoices monthly or thousands of certificates for program participants—shifts from a tedious manual process to a reliable, repeatable workflow. This is valuable not just for efficiency but for consistency and compliance, ensuring every generated document maintains the same structure and formatting. The integration between these three components—Google Docs, Notion, and PDF output—is presented as seamless, though the actual depth of that integration would become clearer through hands-on use. For organizations already invested in either Notion or Google Workspace, this positioning makes natural sense as an extension of existing tooling rather than introducing a completely new platform into the stack.
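
The placeholder-merge workflow described above can be sketched as a small template renderer. This is a minimal illustration under stated assumptions: the `{{field}}` placeholder syntax is hypothetical (the available material does not show PDFOutput's actual syntax), plain dictionaries stand in for Notion database rows, and the output is text rather than PDF.

```python
import re

# Assumed placeholder syntax: {{field_name}}, with optional inner whitespace.
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template, row):
    """Fill every placeholder in the template from one row of data.

    Raises KeyError listing any placeholders the row cannot fill, so an
    incomplete record fails loudly instead of producing a broken document.
    """
    missing = []

    def substitute(match):
        key = match.group(1)
        if key not in row:
            missing.append(key)
            return match.group(0)
        return str(row[key])

    text = PLACEHOLDER.sub(substitute, template)
    if missing:
        raise KeyError(f"missing fields: {sorted(set(missing))}")
    return text


def render_all(template, rows):
    """Produce one document per row, as a batch run would."""
    return [render(template, row) for row in rows]
```

For example, `render_all("Invoice for {{ name }}: {{amount}} USD", rows)` yields one filled document per entry in `rows`. Failing on missing fields reflects the consistency and compliance point above: a silent blank in an invoice is usually worse than a rejected record.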